Building a lightweight agentic workflow

A real-world use case: estimating carbon footprints using AI (!)

TL;DR for non-technical readers

We built a smart, interactive system to quickly estimate the carbon footprint of chemicals. Instead of filling out complicated forms, you can just type questions naturally (like asking about the CO₂ emissions of shipping chemicals). The system uses advanced AI to understand your request, gathers the needed data (even chatting with you if necessary), and calculates the results transparently in deterministic code. This approach blends human-friendly interactions with reliable numbers, so the estimates are clear and traceable.

Try it yourself! While this system is “under construction”, you can test the live prototype at https://agents.lyfx.ai. Expect some rough edges as we continue to build and refine the workflow.

I learned long ago that the gap between “we need to calculate something” and “we have a system that actually works” is often filled with more complexity than anyone initially expects. When we set out to build a quick first-order estimator for cradle-to-gate greenhouse gas emissions of any chemical, I thought: “How hard can it be? It is just a simple spreadsheet, right?”

Well, it turns out that when you want users to input free-form requests like “what is the CO₂ footprint of 50 tonnes of acetone shipped 200 km?” instead of filling out rigid forms, you need something smarter than a spreadsheet. Enter: agentic workflows.

I experimented with several approaches to building agent workflows (from custom orchestration layers to other frameworks), but LangGraph emerged as the most robust solution for this kind of hybrid human-AI interaction. It handles state management, interruptions, and complex routing patterns with the kind of reliability you need when building something people will actually use.

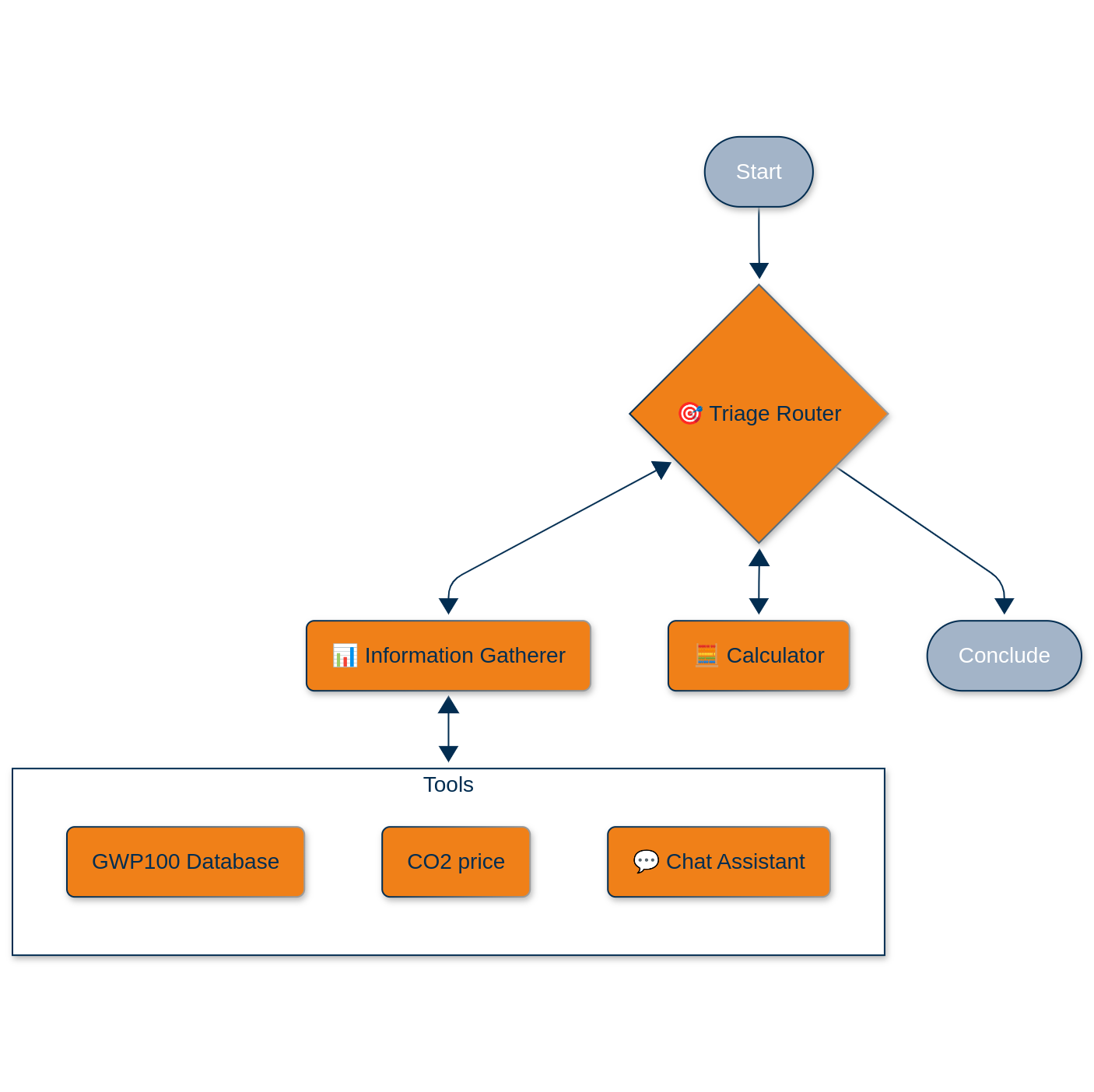

The Architecture: Orchestrated Chaos

Our system assumes a simplified life cycle: production at a single site, transport to the point of use, and partial or total release to the atmosphere. But the magic lies in how we handle the messy human-AI interaction needed to gather the required parameters.

Here’s what we built using LangGraph as our orchestration engine, wrapped in a Django application served via uvicorn and nginx:

The triage router agent

This is the conductor of our little orchestra. It uses OpenAI’s GPT-4o with structured outputs (Pydantic models, because type safety matters even in the age of LLMs) to classify incoming requests:

    from typing import Literal

    from pydantic import BaseModel, Field


    class TriageRouter(BaseModel):
        reasoning: str = Field(description="Step-by-step reasoning behind the classification.")
        classification: Literal["gather information", "calculate", "respond and conclude"]
        response: str = Field(description="Response to user's request")

The triage agent decides whether we need more information, are ready to calculate, or can conclude with a response. It is essentially a state machine with an LLM brain.
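A minimal sketch of how the classification label could drive routing, using only the standard library. The node names (`information_gatherer`, `calculator`, `END`) and the function `route_after_triage` are hypothetical and chosen for illustration; they are not taken from our codebase. In a LangGraph setup, a function like this would typically serve as the routing function of a conditional edge.

```python
from typing import Literal, get_args

# The three labels the triage model is allowed to return.
Classification = Literal["gather information", "calculate", "respond and conclude"]

# Hypothetical node names, for illustration only.
NEXT_NODE = {
    "gather information": "information_gatherer",
    "calculate": "calculator",
    "respond and conclude": "END",
}


def route_after_triage(classification: str) -> str:
    """Map the triage label to the name of the next node."""
    if classification not in get_args(Classification):
        # Guard against labels outside the closed set, e.g. "Calculate".
        raise ValueError(f"Unexpected classification: {classification!r}")
    return NEXT_NODE[classification]


print(route_after_triage("calculate"))
```

Because the set of labels is closed, the router stays a plain dictionary lookup, and any label outside the set fails loudly instead of silently taking a wrong branch.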

The information gatherer agent

This is where things get interesting. The agent is a hybrid: it can call tools programmatically or hand off to an interactive chat agent when it needs human input. The available tools are:

GWP Database Tool: Instead of maintaining a static lookup table, we built an LLM-powered “database” that searches through our chemical inventory with over 200 entries. When you ask for methane’s GWP-100, it does not just do string matching; it understands that “CH₄” and “methane” refer to the same molecule. The tool returns a classification (found/ambiguous/not available) plus the actual GWP value.
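To make the return contract concrete, here is a deterministic stand-in for the lookup. It is not the LLM-powered search described above: it only normalizes the query and matches aliases. The function names, the alias sets, and the three-entry inventory are assumptions for illustration, and the GWP-100 values are sample data (IPCC AR5 figures) that should be checked against your own source.

```python
from typing import Optional

# Tiny illustrative inventory; the real one has over 200 entries.
# GWP-100 values are sample data only (IPCC AR5 figures).
INVENTORY = {
    "methane": {"aliases": {"methane", "ch4"}, "gwp_100": 28.0},
    "carbon dioxide": {"aliases": {"carbon dioxide", "co2"}, "gwp_100": 1.0},
    "nitrous oxide": {"aliases": {"nitrous oxide", "n2o"}, "gwp_100": 265.0},
}

# Map Unicode subscript digits to ASCII so "CH₄" becomes "ch4".
_SUBSCRIPTS = str.maketrans("₀₁₂₃₄₅₆₇₈₉", "0123456789")


def normalize(query: str) -> str:
    return query.strip().lower().translate(_SUBSCRIPTS)


def lookup_gwp(query: str) -> tuple[str, Optional[float]]:
    """Return (classification, gwp_100); the value is None unless found."""
    key = normalize(query)
    exact = [name for name, entry in INVENTORY.items() if key in entry["aliases"]]
    if len(exact) == 1:
        return "found", INVENTORY[exact[0]]["gwp_100"]
    # Fall back to substring matching, which may hit several entries.
    partial = [
        name
        for name, entry in INVENTORY.items()
        if any(key in alias for alias in entry["aliases"])
    ]
    if len(partial) == 1:
        return "found", INVENTORY[partial[0]]["gwp_100"]
    if len(partial) > 1:
        return "ambiguous", None
    return "not available", None


for q in ("CH₄", "oxide", "acetone"):
    print(q, lookup_gwp(q))
```

The "ambiguous" case is the one that matters for the workflow: it is the signal to ask the user a follow-up question rather than guess.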

CO₂ Price Checker: Currently a placeholder returning 0.5 €/ton (we are building incrementally!), but designed to be swapped with a real-time API.

Interactive Chat Capability: When the information gatherer cannot get what it needs from tools, it seamlessly hands off to a chat agent. This is not just a simple handoff: we use LangGraph’s NodeInterrupt mechanism to pause the workflow, collect user input, then resume exactly where we left off.

The calculator agent

This is the only agent that performs actual calculations, and deliberately so. It is a ReAct agent equipped with two calculation tools:

    from langchain_core.tools import tool  # assumed import; the original shows only the decorator


    @tool
    def chemicals_emission_calculator(
        chemical_name: str,
        annual_volume_ton: float,
        production_footprint_per_ton: float,
        transportation: list[dict],
        release_to_atmosphere_ton_p_a: float,
        gwp_100: float,
    ) -> tuple[str, float]:
        """Estimate the cradle-to-gate emissions of a chemical (body omitted here)."""
        ...

The transportation parameter accepts a list of logistics steps: [{'step': 'production to warehouse', 'distance_km': 50, 'mode': 'road'}, {'step': 'warehouse to port', 'distance_km': 250, 'mode': 'rail'}]. Each mode has a hardcoded emission factor (road: 0.00014, rail: 0.000015, ship: 0.000136, air: 0.0005 ton CO₂e per ton·km) sourced from EEA data.

The math is intentionally simple: sum the production emissions, the transport emissions, and the atmospheric release impacts, each calculated deterministically.
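The three-term sum can be sketched as follows. This is one plausible reading of the formula, not our production code: it assumes production emissions are volume times footprint per ton, transport emissions are volume times distance times the mode's factor for each step, and release impact is released tonnage times GWP-100. The function name `estimate_emissions` and the sample inputs are hypothetical; the emission factors are the ones quoted above.

```python
# Emission factors quoted in the article, in ton CO2e per ton·km.
EMISSION_FACTORS = {"road": 0.00014, "rail": 0.000015, "ship": 0.000136, "air": 0.0005}


def estimate_emissions(
    annual_volume_ton: float,
    production_footprint_per_ton: float,
    transportation: list[dict],
    release_to_atmosphere_ton_p_a: float,
    gwp_100: float,
) -> dict[str, float]:
    """Return each term of the sum plus the total, in ton CO2e per year."""
    production = annual_volume_ton * production_footprint_per_ton
    transport = sum(
        annual_volume_ton * step["distance_km"] * EMISSION_FACTORS[step["mode"]]
        for step in transportation
    )
    release = release_to_atmosphere_ton_p_a * gwp_100
    return {
        "production": production,
        "transport": transport,
        "release": release,
        "total": production + transport + release,
    }


result = estimate_emissions(
    annual_volume_ton=50,
    production_footprint_per_ton=2.0,  # placeholder, not a real acetone figure
    transportation=[{"step": "plant to customer", "distance_km": 200, "mode": "road"}],
    release_to_atmosphere_ton_p_a=0.0,
    gwp_100=0.0,  # irrelevant here because nothing is released
)
print(result)
```

For the acetone question from the introduction (50 tonnes, 200 km by road), the transport term works out to 50 × 200 × 0.00014 = 1.4 ton CO₂e. Returning the terms separately keeps each contribution visible for later auditing.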

The tech stack: LangGraph + Django

Our system leverages LangGraph’s StateGraph along with a custom State class to maintain conversation context, collected data, and routing information as agents hand off to each other. During development, we rely on MemorySaver for in-memory persistence, but we’ll transition to SqliteSaver with disk-based checkpoints for production environments running multiple uvicorn workers.

Every agent delivers responses through Pydantic models, which provides type safety and mitigates the typical LLM hallucination problems around routing decisions. When the triage agent decides to “calculate,” it returns exactly that string rather than variations like “Calculate” or “time to calculate.”

The chat agent uses the NodeInterrupt functionality to pause workflows when user input is required. The state tracks which agent initiated the chat through the caller_node field, ensuring proper routing after the information has been collected. The entire workflow runs inside a Django application, which gives us the flexibility to add user authentication, data persistence, and API endpoints as requirements evolve. We serve everything through uvicorn for async capabilities and nginx for production reliability.
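The pause-and-resume routing through caller_node can be imitated in plain Python. The sketch below is not LangGraph's API: `NeedsUserInput`, `run`, and `resume` are hypothetical names that stand in for what NodeInterrupt and a checkpointer do in the real system. Only the `caller_node` field comes from our state.

```python
from dataclasses import dataclass, field
from typing import Optional


class NeedsUserInput(Exception):
    """Raised by a node to pause the workflow and ask the user a question."""

    def __init__(self, question: str):
        super().__init__(question)
        self.question = question


@dataclass
class State:
    collected: dict = field(default_factory=dict)
    caller_node: Optional[str] = None       # which node asked for the chat
    pending_question: Optional[str] = None  # set while the workflow is paused


def information_gatherer(state: State) -> State:
    if "distance_km" not in state.collected:
        state.caller_node = "information_gatherer"
        raise NeedsUserInput("What is the transport distance in km?")
    return state


NODES = {"information_gatherer": information_gatherer}


def run(state: State, node_name: str) -> State:
    """Run one node; on interrupt, record the question and return paused."""
    try:
        state = NODES[node_name](state)
        state.pending_question = None
    except NeedsUserInput as interrupt:
        state.pending_question = interrupt.question
    return state


def resume(state: State, key: str, answer: float) -> State:
    """Store the user's answer and re-enter the node that asked for it."""
    state.collected[key] = answer
    return run(state, state.caller_node)


paused = run(State(), "information_gatherer")
print(paused.pending_question)
done = resume(paused, "distance_km", 200.0)
print(done.collected)
```

The point of recording `caller_node` is that the chat step stays generic: it does not need to know which agent asked, only where to return once the answer is in the state.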

Several key features are still in development. Currently, when the system needs the GHG footprint per ton of a chemical’s production, it simply asks the user. This is a temporary solution while we build out a comprehensive database of production routes and their associated emissions. Think of it as a more sophisticated version of what SimaPro or GaBi offers, but specifically focused on chemicals and accessible through an API.

We’re also planning to integrate real-time data feeds for CO₂ prices, shipping routes, and chemical properties through live APIs. However, we’re prioritizing the orchestration layer first, then we’ll swap in these real data sources once the foundation is solid.

Another area for improvement is conversation memory. Currently, each calculation starts from scratch, but adding session persistence to remember previous queries and build on them will be straightforward given our current architecture.

This architecture serves as an excellent starting point because it maintains a clear separation of concerns. The LLMs handle the messy human interaction and the routing logic, while Python functions handle the deterministic calculations. This means domain experts can validate and modify the mathematical components without having to touch the AI stack.

The workflows remain highly debuggable thanks to LangGraph’s state management, which lets you inspect exactly what each agent decided and why. When something goes wrong, you’re not stuck debugging a black box. The system also supports incremental complexity beautifully: you can start with hardcoded values, gradually add database lookups, and then integrate real-time APIs, all while keeping the core workflow structure intact.

Perhaps most importantly, every calculation step becomes auditable through LangSmith logging. When someone inevitably asks “where did that 142.7 tons CO₂e come from?”, you can show them the exact inputs and formula used, creating a complete audit trail from question to final result.

The bigger picture

This is not just about carbon footprints. The pattern – use LLMs for natural language understanding and workflow orchestration, but keep the critical calculations in deterministic code – applies to any domain where you need to mix soft reasoning with hard numbers.

Supply chain risk assessment? Same pattern. Financial modeling with regulatory compliance? Same pattern. Any time you find yourself thinking “we need a smart interface to our existing calculations,” this architecture gives you a starting point.

The future is hybrid intelligence, not just betting everything on an LLM and hoping for the best.

Built in Python with LangGraph, OpenAI APIs, and Django. Co-programmed using Claude Sonnet 3.7 and 4, and ChatGPT o3 (not “vibe coded”). Currently in active development. Try it at https://agents.lyfx.ai.